- Jan 12, 2026

The Silent Efficiency Problem: Debugging Multi-Agent AI Systems

- Teddy Kim

Three pull requests. Same root cause. Zero complaints.

That's the moment I realized my multi-agent system had been silently burning cycles for days. The agents were doing exactly what I asked them to do. They executed their protocols, created PRs, received feedback, and tried again. Rinse and repeat. The code worked. The tests ran. But the system was fundamentally broken.

Here's the thing about agents: they don't complain. A junior engineer might come to you after the second rejection and say "I keep making the same mistake." An agent just keeps executing. Silently. Efficiently. Amplifying waste at machine speed.

The Pattern Nobody Saw

I run a multi-agent development system with two primary agents: va-worker (implements features from GitHub tickets) and va-reviewer (reviews PRs before human merge). The workflow is simple. Worker creates PR, reviewer checks it, human merges if approved.

Except the human wasn't getting many PRs to merge.

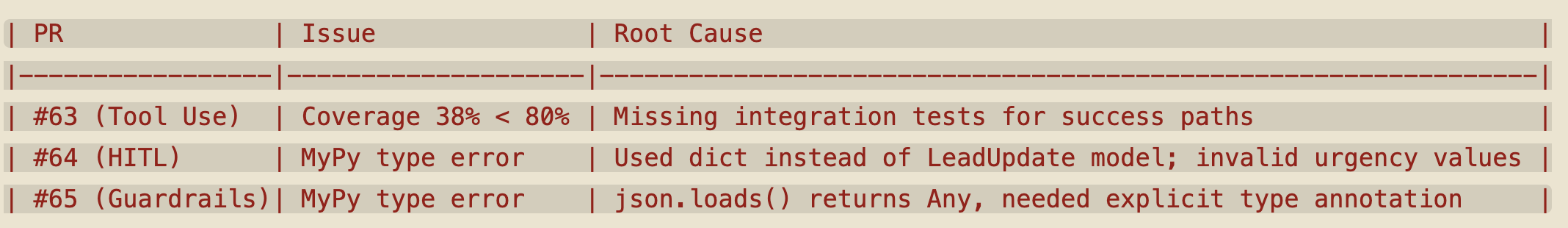

Looking at the recent PR history, I saw this:

Waste Doesn't Go Away

If you've studied Lean manufacturing, you know about the Seven Wastes. Two of them are particularly relevant here:

Handoff waste happens when work moves between people or systems. Every time va-worker creates a PR that va-reviewer rejects, that's a failed handoff. The work has to go back, get reworked, and attempt the handoff again.

Defect waste happens when bugs are caught late instead of early. In this case, type errors and coverage gaps were being caught at PR review time, not during development.

The insight that took me longer than it should have: agents don't eliminate waste. They can amplify it.

Traditional debugging doesn't apply here. The code isn't broken. The worker agent can write perfectly functional Python. The reviewer agent can correctly identify type errors. Both agents are working exactly as designed.

The system is what's broken.

Your Job Isn't Code Review

This is where most people get human-in-the-loop supervision wrong. They think their job is to review the agent's output, like a senior engineer reviewing a junior's code.

That's not it.

Your job is to calibrate the delivery system. You're not reviewing code. You're reviewing system dynamics. You're looking for patterns across handoffs that indicate the system is miscalibrated.

When you see the same failure repeat, that's not a code problem. That's a system design problem.

Agent Self-Repair

Here's where it gets interesting. Rather than me manually fixing the protocol, I asked the agent to analyze its own failure pattern.

I gave it a simple prompt: "There seems to be a pattern where va-worker creates a pull request and va-reviewer pushes back because of similar root causes. Let me analyze the recent PR history to identify the pattern and suggest improvements to the workflow."

The agent read through its recent PRs and came back with this analysis:

Root Causes Identified:

1. Schema/Model Ignorance - Worker doesn't read src/models/database.py before implementing code that uses those models

2. Missing Quality Gates - Worker doesn't run mypy before creating PR

3. Coverage Verification Gap - Worker runs tests but doesn't verify per-module coverage

Proposed Protocol Changes for github-ticket-worker:

Add Pre-Implementation Research phase (MANDATORY):

- Read src/models/database.py first to understand Literal types, field constraints

- Check method signatures in src/integrations/ to know expected parameter types

- Identify which Pydantic models the code will use

Add Quality Gate Checklist (MANDATORY before PR):

- Run mypy with zero errors required

- Run ruff check with fixes applied

- Run pytest with 80%+ coverage requirement

- Verify NEW module coverage is at least 80%

Add Common Pitfalls section with examples from actual failures.

The agent then updated its own protocol definition and created PR #66. The reviewer approved it.
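To make the Quality Gate Checklist concrete, here is a minimal sketch of a script that runs the three commands in order and reports which ones failed. It is not the protocol the agent wrote. The `src` path, the exact flags, and the names `GATES`, `run_command`, and `run_gates` are my assumptions for illustration, and the `--cov` flags assume the pytest-cov plugin is installed.

```python
import subprocess
from typing import Callable, List, Sequence, Tuple

Gate = Tuple[str, List[str]]

# Commands mirror the checklist. Paths and flags are assumptions;
# adjust them to your repository layout.
GATES: List[Gate] = [
    ("mypy", ["mypy", "src"]),
    ("ruff", ["ruff", "check", "--fix", "src"]),
    ("pytest", ["pytest", "--cov=src", "--cov-fail-under=80"]),
]


def run_command(cmd: Sequence[str]) -> int:
    """Run one command and return its exit code (127 if the tool is missing)."""
    try:
        return subprocess.run(list(cmd), check=False).returncode
    except FileNotFoundError:
        return 127


def run_gates(
    gates: Sequence[Gate],
    runner: Callable[[Sequence[str]], int] = run_command,
) -> List[str]:
    """Run every gate and return the names of the gates that failed."""
    failed = []
    for name, cmd in gates:
        if runner(cmd) != 0:
            failed.append(name)
    return failed
```

Calling `run_gates(GATES)` before opening a PR returns an empty list only when every gate passes. The `runner` parameter exists so the logic can be exercised without the real tools installed.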

Calibration Principles

After watching this play out, here's what I learned about calibrating multi-agent systems:

Watch for repeated handoff failures. One PR rejection is normal. Three consecutive rejections for similar reasons means your system is miscalibrated.
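The three-rejection rule can be checked mechanically. The sketch below assumes each review has already been tagged with a root-cause label, which is a step my system did not have. The `Review` class, the labels, and the PR numbers in the usage note are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Review:
    pr_number: int
    approved: bool
    root_cause: Optional[str] = None  # hypothetical label, e.g. "type_error"


def repeated_rejection(reviews: List[Review], limit: int = 3) -> Optional[str]:
    """Return the root cause shared by `limit` consecutive rejections, if any."""
    streak_cause: Optional[str] = None
    streak = 0
    for review in reviews:
        if review.approved or review.root_cause is None:
            # An approval or an unlabeled review breaks the streak.
            streak_cause, streak = None, 0
        elif review.root_cause == streak_cause:
            streak += 1
        else:
            streak_cause, streak = review.root_cause, 1
        if streak >= limit:
            return streak_cause
    return None
```

For example, three rejections in a row labeled `"type_error"` return `"type_error"`, while the same three with an approval in the middle return `None`.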

Make quality gates explicit and automated. The worker agent was running tests, but it didn't know to check coverage thresholds. Type checking wasn't in the protocol at all. You can't assume agents will infer quality standards from context.
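An aggregate coverage number can hide an untested new module, which is why the per-module check matters. Here is a rough sketch that reads the JSON report coverage.py writes with `coverage json` and flags new modules under the threshold. The function name and the way the list of new modules is supplied are assumptions for illustration.

```python
import json
from typing import Dict, List


def modules_below_threshold(
    report: dict, modules: List[str], threshold: float = 80.0
) -> Dict[str, float]:
    """Map each listed module under `threshold` to its coverage percentage.

    `report` is the parsed coverage.py JSON report. A module that is
    absent from the report counts as 0% covered.
    """
    files = report.get("files", {})
    failures = {}
    for module in modules:
        summary = files.get(module, {}).get("summary")
        percent = summary["percent_covered"] if summary else 0.0
        if percent < threshold:
            failures[module] = percent
    return failures


def load_report(path: str = "coverage.json") -> dict:
    """Load the report from disk (coverage.json is coverage.py's default name)."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)
```

A project can sit at 90% overall while a newly added file is at 40%. Passing only the new file paths to `modules_below_threshold` surfaces that case before the reviewer does.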

Build feedback loops into protocols. The original worker protocol had no research phase. It jumped straight from reading the ticket to writing code. The revised protocol forces the agent to read schema definitions first. This is a forcing function that prevents an entire class of failures.
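One way to picture the forcing function is as a guard that refuses to enter the implementation phase until the required files have been read. My actual protocol is a prompt rather than code, so treat this as an illustration only. `WorkerSession` and its methods are hypothetical names; the file path comes from the revised protocol.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple

# Required reading taken from the revised protocol.
REQUIRED_READS: Tuple[str, ...] = ("src/models/database.py",)


@dataclass
class WorkerSession:
    files_read: Set[str] = field(default_factory=set)
    phase: str = "research"

    def read(self, path: str) -> None:
        """Record that the worker has read a file."""
        self.files_read.add(path)

    def missing_research(self) -> List[str]:
        """List required files the worker has not read yet."""
        return [p for p in REQUIRED_READS if p not in self.files_read]

    def start_implementation(self) -> None:
        """Move to the implementation phase, or fail if research is incomplete."""
        missing = self.missing_research()
        if missing:
            raise RuntimeError(f"research phase incomplete: {missing}")
        self.phase = "implementation"
```

The point of the design is that skipping research becomes an error rather than a silent default.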

Trust but verify. Even after the protocol update, I still watch for patterns. The agents are more reliable now, but they can still drift. Calibration isn't one-and-done. It's continuous.

The Silent Efficiency Problem

The scariest thing about agent systems is that inefficiency doesn't announce itself. In a human team, you'd see frustration. Longer standups. Complaints in Slack. Decreased morale. These are signals that something is wrong.

Agents give you none of that. They just execute. The only signal you get is output rate. If you're not watching carefully, you won't notice that your "efficient" system has been burning cycles on the same failure loop for days.

This is why treating agents like autonomous workers is a mistake. They're more like a production line. And your job isn't to inspect each widget coming off the line. Your job is to watch the line itself for inefficiencies, bottlenecks, and quality drift.

When you see a pattern, you don't fix the widget. You calibrate the system.
