- Jan 28, 2026

There Are No Wrong Jobs: Why Career Pivots Are AI Superpowers

- Teddy Kim


I've held six or seven different jobs in twenty-five years. Every transition felt like a better fit. Product manager to architect. Architect to lead engineer. Lead engineer to director. Each pivot came at a point when I realized, "I'm in the wrong job."

But here's what I've realized: those "wrong" jobs gave me something specialists never get. They gave me perspective across the entire value chain. And in a world where AI amplifies whatever context you bring, that accumulated perspective isn't a liability. It's a superpower.

The perspective problem

In a previous post, I introduced Kim's Law:

The quality we give to customers cannot exceed the quality we give each other.

That means internal deliverable quality sets the ceiling for everything downstream.

But here's the uncomfortable truth: most people can't judge whether an internal deliverable is good or bad. How could you, unless you've done all the jobs?

To spot a weak PRD, you need to have written PRDs. To recognize a fragile technical plan, you need to have architected systems. To know when a backlog is setting up a team for failure, you need to have been the one responsible for velocity and work-in-progress.

A handful of people have held all these roles. At some point, each job became "the wrong job"—so they transitioned. But that trail of wrong jobs? That's actually a matrix of mental models. And those mental models compound.

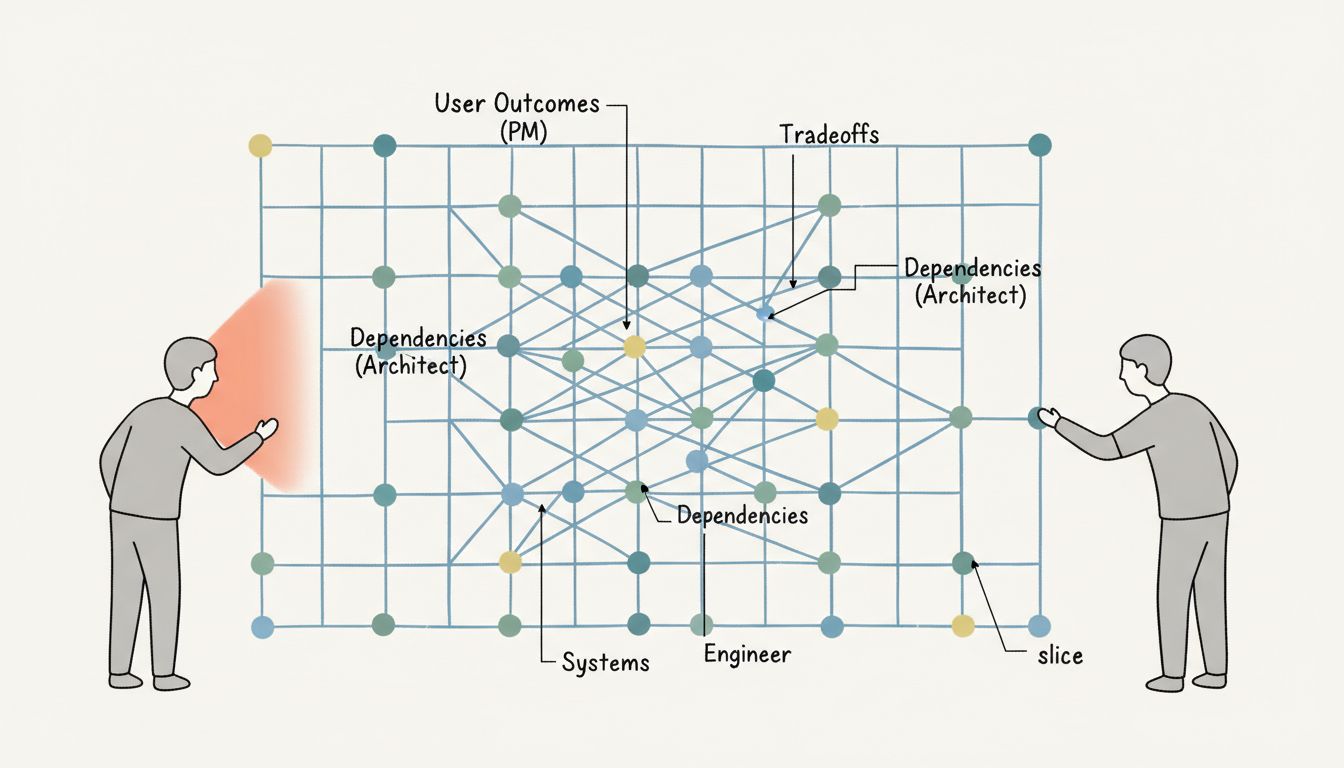

The quality chain is a chain of handoffs

Value delivery isn't a straight line. It's a series of handoffs, each one a potential weak link.

PRD gets handed to architecture. Architecture gets handed to backlog. Backlog gets handed to implementation. Implementation gets handed to QA. QA gets handed to deployment. Deployment gets handed to operations.

Every handoff is an opportunity for quality to degrade. And the insidious thing is that degradation happens in the gaps between roles. The architect doesn't realize the PRD is ambiguous until they try to design around it. The lead engineer doesn't realize the architecture is fragile until they try to break it into tasks. The QA engineer doesn't realize the implementation is untestable until they try to test it.

These problems live in the seams. And you can only see them if you've been on both sides of the seam.

I've been on both sides of every one of those handoffs. Not because I planned it. Because each role eventually felt limiting, and I moved on. What felt like restlessness was actually reconnaissance.

The Munger model

Charlie Munger talked about building a "latticework of mental models." The idea is simple: the more lenses you have, the better you see reality. Physics gives you a lens. Psychology gives you a lens. Economics gives you a lens. Each lens reveals something the others miss.

Most people apply this to reading books. But Munger actually lived it. He was a lawyer before he was an investor. The legal training gave him a mental model for contracts, incentives, and human nature that pure finance people lack.

The zigzag career works the same way. Every role you've held is a mental model you can apply to every problem you encounter. Product management taught you to think in user outcomes. Architecture taught you to think in tradeoffs and constraints. Engineering leadership taught you to think in dependencies and capacity. Each "wrong" job added a lens.

The specialists around you see one slice. You see the whole thing.

AI as force multiplier

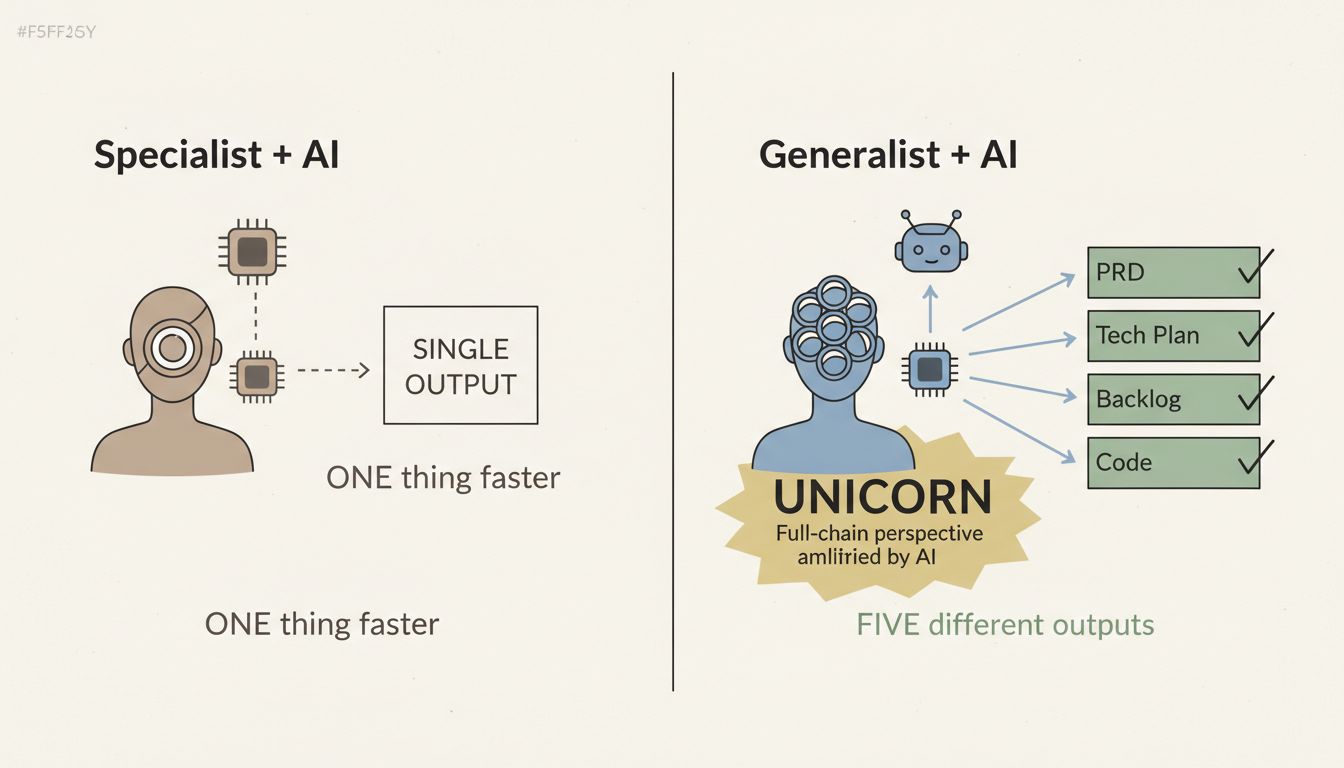

Here's where it gets interesting. AI doesn't replace perspective. It amplifies whatever perspective you bring.

Hand an LLM to someone who's only ever been an engineer, and they'll use it to write code faster. Hand the same LLM to someone who's been a PM, an architect, and an engineer, and they'll use it to generate PRDs, technical plans, and implementation—and they'll know when each output is garbage because they've done those jobs themselves.

The person with accumulated context can prompt the AI across the entire value chain. The specialist can only prompt it in their lane.

This is why the "unicorn" narrative is wrong. The unicorn isn't someone with superhuman abilities in one domain. The unicorn is someone with accumulated perspective across many domains, force-multiplied by AI. One person with full-chain context can now do what used to require a coordinated team.

And the fuel for that leverage? A career of "wrong" jobs.

The future is singular

Organizations are figuring this out, slowly. The traditional model—specialists coordinated by generalists—doesn't scale when AI can handle the coordination. What scales is individuals with enough perspective to see the whole system, using AI to execute across every layer.

This is terrifying if you spent your career going deep in one area. It's liberating if you spent your career zigzagging and wondering if you'd ever find your lane.

Your lane is the whole chain.

The rakes that specialists keep stepping on? You can see them coming because you've been on both sides. The quality problems that hide in the seams? You can spot them because you've sewn those seams yourself. The internal deliverables that set up downstream teams for failure? You can fix them at the source because you understand the source.

There are no wrong jobs. Every role you've held is a lens. Every transition you regretted was reconnaissance. The accumulated perspective is the point.

The punchline

If your career feels like a series of wrong turns, consider the alternative framing: you've been building a latticework of mental models that most specialists never acquire.

Kim's Law says the quality you deliver to customers cannot exceed the quality you give each other. But to deliver quality across the chain, you have to see the chain. And to see the chain, you have to have worked the chain.

The zigzag career isn't a bug. It's the training data.

If you want to go deeper on leveraging AI with accumulated perspective, I put together a free study guide that covers the fundamentals of AI-assisted development. It's the resource I wish I had when I started connecting these dots.